Nvidia invested $2 billion in Marvell Technology, the data center chip designer based in Santa Clara, as part of a strategic partnership spanning multiple chip market segments. The investment targets Marvell's interconnect technology, which handles the critical data pathways between processors in AI systems.

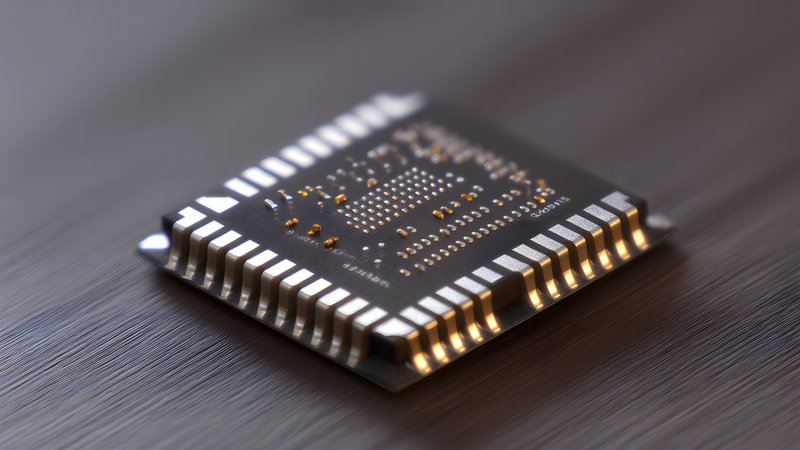

The move signals that Nvidia no longer sees raw compute power as enough on its own: the bottleneck has shifted to moving data efficiently between chips and systems. As AI models balloon in size and training clusters grow to tens of thousands of GPUs, the interconnect layer becomes mission-critical infrastructure. Marvell's expertise in data center processors and networking chips positions it as a key player in solving what is becoming one of AI's most expensive infrastructure challenges.

The $2 billion figure suggests this is not just a financial investment but a strategic bet on controlling more of the AI stack. The original reporting lacks details on specific technical collaboration or product roadmaps, but the timing aligns with industry-wide concerns about bandwidth limitations in large-scale AI deployments. With no other sources offering competing perspectives, there is limited visibility into potential regulatory implications or competitive responses.

For developers building AI applications, this partnership could eventually mean better performance and lower costs for distributed training and inference workloads. But don't expect immediate benefits. Hardware partnerships like this typically take 18 to 24 months to produce shipping products that matter for production deployments.