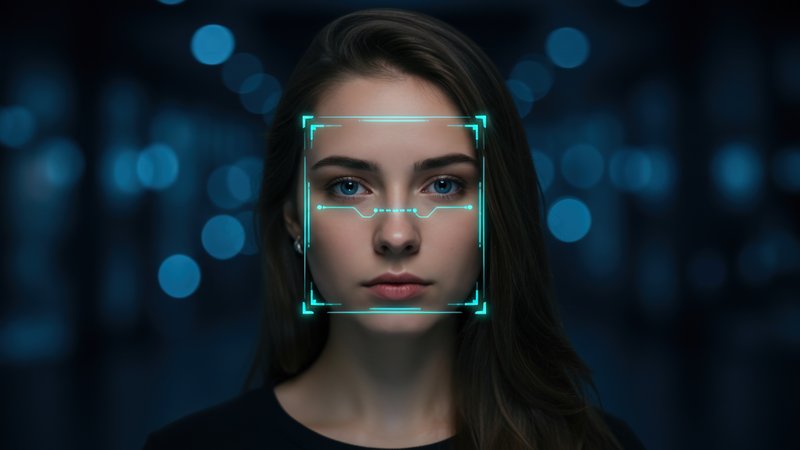

OkCupid gave facial recognition company Clarifai access to nearly 3 million user photos, plus location data, without telling users or getting their consent, the FTC revealed in a settlement announced yesterday. The dating app's parent company, Match Group, agreed to permanent restrictions on data sharing but will pay no financial penalty for the 2014 incident. Clarifai, which markets AI services to "military, civilian, intelligence, and government" customers, received the data with no contractual restrictions on how it could be used.

This case exposes how AI training data can be harvested from consumer apps through backdoor deals users never see. While facial recognition companies talk publicly about ethical AI, some are quietly building datasets from dating profiles, social media, and other personal sources. The FTC found that OkCupid directly violated its own privacy policy, which promised not to share personal information without notifying users and giving them a way to opt out. What's particularly concerning: OkCupid "took extensive steps to conceal" the data sharing and tried to obstruct the FTC investigation.

The zero-penalty settlement reflects the Trump administration's lighter enforcement approach. Both Democratic FTC commissioners had been fired, leaving Republicans in full control of the agency. This sets a troubling precedent in which companies can secretly feed user data to AI firms, get caught years later, and walk away with nothing more than a promise not to do it again. For developers building with facial recognition APIs, the case highlights the murky provenance of training data from major providers.

If you're using Clarifai or similar services, ask hard questions about data sources. The photos powering your AI models might come from dating apps, social networks, or other consumer services where users never consented to AI training. The FTC's weak response here basically green-lights this practice as long as companies eventually promise to be more transparent.