A quiet March 2026 FTC settlement is producing a loud consequence this week: Clarifai deleted three million photos it obtained from OkCupid in 2014, plus every model trained on them. Reuters broke the story and TechCrunch picked it up. The data-sharing arrangement started when Clarifai founder and CEO Matthew Zeiler emailed a colleague: "We're collecting data now and just realized that OKCupid must have a HUGE amount of awesome data." OkCupid executives held equity in Clarifai at the time, the kind of conflict of interest that looks worse in 2026 than it did in 2014.

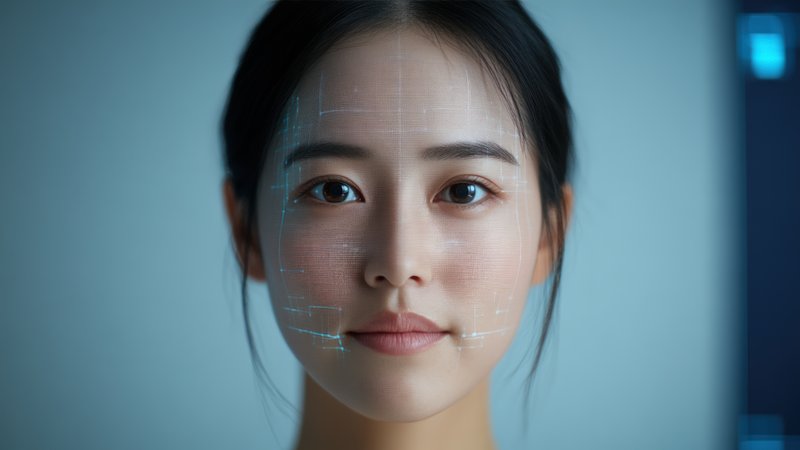

The consequences on the AI side are more interesting than the photo deletion itself. Three million face photos is a useful training set but not an unprecedented one; Clarifai has plenty of data. The deletion also covered every model trained on the OkCupid data, which is a different and much harder category. Model deletion in 2026 means the weights are gone, the embeddings are gone, any fine-tunes derived from those models are gone, and so is whatever derivative classifier or customer deployment depended on them. A 2019 New York Times article first exposed that Clarifai had used this dataset to build tools that estimate age, sex, and race from faces. Those are exactly the kinds of models that spawn downstream deployments, and downstream deployments are where the cleanup gets expensive and, in practice, incomplete.
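The cascade described above is a reachability problem over a lineage graph. Here is a minimal Python sketch, assuming lineage is stored as a hypothetical mapping from each artifact to the artifacts built directly from it (every artifact name below is invented for illustration). A breadth-first traversal from the deleted dataset collects every model, embedding set, fine-tune, classifier, and deployment that would have to go with it.

```python
from collections import deque

# Hypothetical lineage graph (invented names): each key is an artifact and
# each value lists the artifacts built directly from it.
LINEAGE: dict[str, list[str]] = {
    "dataset:partner_photos": ["model:face_base"],
    "model:face_base": [
        "embeddings:face_v1",
        "model:age_classifier",
        "model:demographics_finetune",
    ],
    "model:age_classifier": ["deploy:customer_a"],
    "model:demographics_finetune": ["deploy:customer_b"],
    "dataset:licensed_stock": ["model:general_tagger"],
}


def deletion_cascade(root: str, edges: dict[str, list[str]]) -> list[str]:
    """Return `root` plus every artifact transitively derived from it, in BFS order."""
    seen = {root}
    order = [root]
    queue = deque([root])
    while queue:
        node = queue.popleft()
        # Artifacts with no recorded children simply have no entry in `edges`.
        for child in edges.get(node, []):
            if child not in seen:  # a DAG node can be reached via several parents
                seen.add(child)
                order.append(child)
                queue.append(child)
    return order


if __name__ == "__main__":
    for artifact in deletion_cascade("dataset:partner_photos", LINEAGE):
        print(artifact)
```

The `seen` set matters because lineage is a DAG rather than a tree: a fine-tune built from two parent models should be scheduled for deletion once, not twice. Anything unreachable from the dataset, like the hypothetical `model:general_tagger`, is left alone, and that conclusion is only as trustworthy as the graph is complete.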

Two legal points are worth naming. First, the FTC could not impose a financial penalty. Because this is a "first-time offense of this type" under its governing statute, the agency can only impose compliance requirements and prohibitions. OkCupid and Match Group are permanently prohibited from misrepresenting, or assisting others in misrepresenting, how data is collected and shared. They did not admit to the allegations. Second, the twelve-year gap between the 2014 data grab and the 2026 consequence is a reminder that training-data liability runs on long timescales. The 2019 NYT story triggered the FTC investigation; the March 2026 settlement produced the actual deletion this month. If you train on user data today, the clock starts now, and the liability's half-life is longer than the service life of most models you ship.

Two takeaways for builders. First, "delete the models, not just the photos" is the emerging regulatory template. This is what a GDPR-style right to erasure actually looks like when applied to ML systems. Your data lineage documentation (which model was trained on which dataset, which deployment uses which model) is now a legal artifact, not a governance nicety; if you cannot produce that lineage on a regulator's timeline, you will end up deleting more models than you have to, defensively. Second, executive cross-holdings between data-generating companies and AI-training companies are now a concrete liability class. Zeiler's email was not damning because it was crude. It was damning because OkCupid executives had equity in Clarifai, which made the data sharing look like self-dealing rather than a legitimate integration. The "trust us, we have a privacy policy" posture does not hold up legally when the investments and emails tell a different story.
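The cost of missing lineage can be made concrete with a minimal Python sketch. `ModelRecord`, `split_deletion`, and every model and dataset name here are hypothetical, and a real registry would also track deployments and derived artifacts. The point is the three-way split: recorded lineage lets you condemn or clear a model precisely, while a model with no recorded training sets can be neither cleared nor condemned. That third bucket is where defensive over-deletion would likely come from.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ModelRecord:
    """Hypothetical lineage record for one trained model."""
    name: str
    # Datasets the model was trained on; None means lineage was never recorded.
    trained_on: Optional[frozenset[str]]


def split_deletion(records: list[ModelRecord], dataset: str) -> tuple[list[str], list[str]]:
    """Split models into (provably tainted, provenance unknown) for `dataset`.

    Models whose recorded lineage excludes `dataset` appear in neither list.
    """
    tainted = [r.name for r in records
               if r.trained_on is not None and dataset in r.trained_on]
    unknown = [r.name for r in records if r.trained_on is None]
    return tainted, unknown


RECORDS = [
    ModelRecord("age_estimator_v2", frozenset({"dataset:partner_photos", "dataset:stock_faces"})),
    ModelRecord("general_tagger_v5", frozenset({"dataset:stock_objects"})),
    ModelRecord("legacy_face_embedder", None),
]

if __name__ == "__main__":
    tainted, unknown = split_deletion(RECORDS, "dataset:partner_photos")
    print("delete:", tainted)        # recorded lineage proves exposure
    print("cannot clear:", unknown)  # no lineage, so deletion is the likely defensive default
```

With lineage recorded, only `age_estimator_v2` is provably exposed and `general_tagger_v5` is cleared by its own record. `legacy_face_embedder` ends up in the unknown bucket purely because nobody wrote its lineage down.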