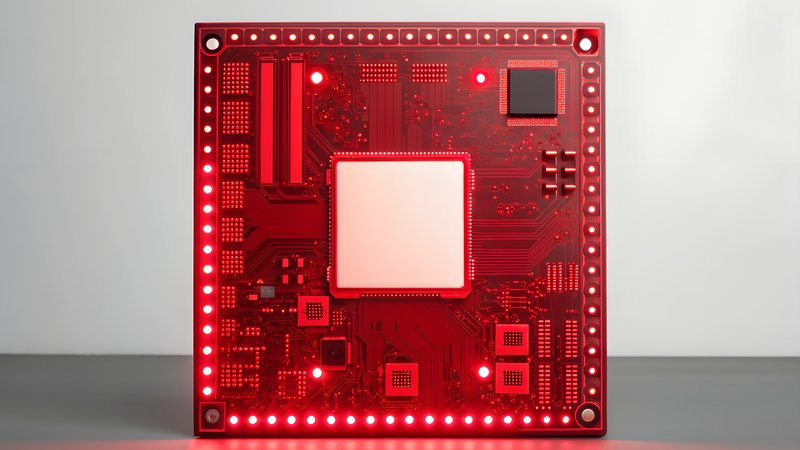

Meta announced a major expansion of its partnership with Broadcom, committing to deploy custom AI processors totaling one gigawatt of power capacity, roughly the electricity demand of a small city. The deal extends the two companies' existing collaboration on Meta's in-house AI accelerators and signals Meta's bet on custom silicon over off-the-shelf GPUs for training its next-generation models.

This move highlights a critical shift in AI infrastructure strategy. While the industry obsesses over GPU availability, Meta is targeting a different bottleneck: interconnect bandwidth. As models scale into the trillion-parameter range, the binding constraint is increasingly not raw compute but how fast chips can exchange data with each other. Because Meta's custom processors are designed specifically for its workloads, the company can tune the silicon and the communication protocols together.
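To make the bandwidth argument concrete, here is a rough back-of-envelope sketch in Python. It compares the time a chip spends computing one training step with the time spent synchronizing gradients through a ring all-reduce. Every number in it (chip count, link bandwidth, chip throughput, tokens per chip) is a hypothetical placeholder rather than a figure from Meta or Broadcom, and the model deliberately ignores latency, model sharding, and any overlap of compute with communication.

```python
def ring_allreduce_seconds(payload_bytes: float, num_chips: int,
                           link_bytes_per_s: float) -> float:
    """Bandwidth-only estimate for a ring all-reduce.

    Each chip sends and receives 2 * (N - 1) / N times the payload size.
    Latency and protocol overhead are ignored.
    """
    if num_chips < 2:
        return 0.0
    return 2 * (num_chips - 1) / num_chips * payload_bytes / link_bytes_per_s


def compute_seconds(params: float, tokens_per_chip: float,
                    flops_per_s: float) -> float:
    """Common rule of thumb: about 6 FLOPs per parameter per token
    for one forward plus backward pass of a dense model."""
    return 6 * params * tokens_per_chip / flops_per_s


# Hypothetical inputs, chosen only for illustration.
PARAMS = 1e12             # trillion-parameter model
GRAD_BYTES = PARAMS * 2   # 2 bytes per gradient value (16-bit)
NUM_CHIPS = 1024
LINK_BYTES_PER_S = 100e9  # assumed 100 GB/s per chip
CHIP_FLOPS = 1e15         # assumed 1 PFLOP/s sustained
TOKENS_PER_CHIP = 4096

comm = ring_allreduce_seconds(GRAD_BYTES, NUM_CHIPS, LINK_BYTES_PER_S)
comp = compute_seconds(PARAMS, TOKENS_PER_CHIP, CHIP_FLOPS)

print(f"compute per step:       {comp:.1f} s")
print(f"communication per step: {comm:.1f} s")
print(f"communication / compute: {comm / comp:.2f}")
```

Under these assumed numbers, communication takes longer than compute, so a faster chip would mostly wait on the network, while doubling `LINK_BYTES_PER_S` halves the communication estimate. Real systems shard the model and overlap the two phases, so treat this as an illustration of the trade-off, not a prediction.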

Other reporting suggests this is part of a broader infrastructure arms race. Meta separately inked a $6 billion deal with Corning for optical fiber and high-density connectivity, a response to the "copper wall": the limit on how fast electrical signals can move between processors over copper links. Meanwhile, conflicting reports suggest OpenAI is also exploring custom chip partnerships, though with different vendors and strategies.

For developers, this custom silicon trend means the AI landscape is fragmenting. Meta's optimized hardware will likely perform best with Meta's own models and frameworks, creating new platform dependencies. If you're building on Meta's infrastructure or using its open models, you may see performance advantages. The trade-off is less portability and more vendor lock-in as each major player optimizes its entire stack, from silicon to software.