Google DeepMind released Vision Banana on Friday, a generalist vision model built by instruction-tuning Nano Banana Pro (the image generator behind Gemini 3 Pro Image) on a mixture of its original training data and a modest amount of vision-task data. The technical claim is unusual. Rather than training separate heads for segmentation, depth estimation, and surface-normal prediction, Vision Banana parameterizes each task's output space as an RGB image and lets the base generator produce those outputs directly. On Cityscapes semantic segmentation it reports a mean Intersection-over-Union (mIoU) of 0.699, a 4.7-point absolute improvement over the 0.652 of Meta's SAM 3. Kaiming He and Saining Xie, two of the most cited authors in modern vision research, are listed on the paper. The core thesis, stated in the paper's title, is direct: image generators are generalist vision learners.
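For readers less familiar with the metric, here is a minimal sketch of how mean Intersection-over-Union is computed for one prediction, plus the arithmetic behind the reported gap. The function name `mean_iou` and the toy labels are illustrative assumptions, not the paper's evaluation code. Benchmark protocols typically accumulate intersections and unions over a whole dataset rather than a single image.

```python
def mean_iou(pred, target, num_classes):
    """Mean IoU over the classes that appear in pred or target.

    pred and target are equal-length flat sequences of integer class ids,
    one entry per pixel. Classes absent from both are skipped.
    """
    ious = []
    for c in range(num_classes):
        inter = sum(1 for p, t in zip(pred, target) if p == c and t == c)
        union = sum(1 for p, t in zip(pred, target) if p == c or t == c)
        if union > 0:
            ious.append(inter / union)
    if not ious:
        raise ValueError("no class is present in pred or target")
    return sum(ious) / len(ious)


# Toy 2x3 label map, flattened row by row, with three classes.
target = [0, 0, 1, 1, 2, 2]
pred = [0, 1, 1, 1, 2, 0]
score = mean_iou(pred, target, num_classes=3)  # per-class IoUs: 1/3, 2/3, 1/2

# The reported scores differ by 0.047 mIoU, i.e. 4.7 points on a 0-100 scale.
gap_points = (0.699 - 0.652) * 100
```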

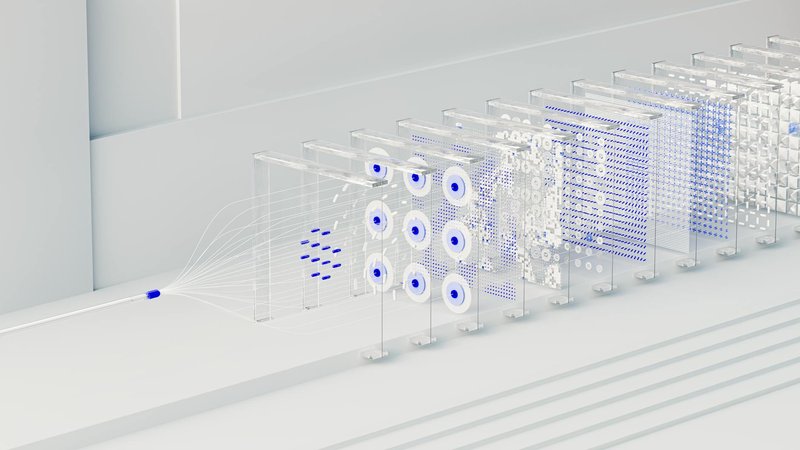

The architectural argument matters more than the headline benchmark. Classical computer vision has spent two decades building task-specific decoders: dense prediction heads for segmentation, regression heads for depth, box-regression and classification heads for object detection. Each maps a backbone's feature representation to a task-specific output format. Vision Banana drops that scaffolding by representing every task output as an image and reusing the base model's image-generation pathway. Segmentation masks are RGB images. Depth maps are RGB images. Surface normals are RGB images. The model's capacity to produce coherent imagery is repurposed as the capacity to produce dense pixel-level predictions in any task that admits a pictorial representation. That trick is not new (Painter and SegGPT, both from BAAI, explored similar territory in 2023), but Vision Banana is the first instance where the underlying generator is at frontier scale and the resulting generalist beats domain specialists.
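To make "task outputs as RGB images" concrete, the sketch below encodes a segmentation mask and a surface normal as pixel colors and decodes them back. The palette, the function names, and the linear normal mapping are assumptions chosen for illustration. The article does not describe Vision Banana's actual encodings. Decoding by nearest palette color reflects the fact that a generator's output colors will rarely match the palette exactly.

```python
import math

# Hypothetical palette: class id -> RGB color (not the paper's mapping).
PALETTE = {0: (128, 64, 128), 1: (220, 20, 60), 2: (0, 0, 142)}


def encode_mask(mask):
    """Turn a 2D grid of class ids into a 2D grid of RGB pixels."""
    return [[PALETTE[c] for c in row] for row in mask]


def decode_mask(rgb):
    """Map each pixel to the class whose palette color is closest."""
    def nearest(px):
        return min(
            PALETTE,
            key=lambda c: sum((a - b) ** 2 for a, b in zip(px, PALETTE[c])),
        )
    return [[nearest(px) for px in row] for row in rgb]


def encode_normal(n):
    """Map a unit normal with components in [-1, 1] to 8-bit RGB."""
    return tuple(round((v + 1.0) * 127.5) for v in n)


def decode_normal(rgb):
    """Invert encode_normal, then renormalize to undo quantization drift."""
    v = [c / 127.5 - 1.0 for c in rgb]
    norm = math.sqrt(sum(x * x for x in v))
    return tuple(x / norm for x in v)


mask = [[0, 1], [2, 0]]
rgb = encode_mask(mask)

# Simulate an imperfect generator by nudging one pixel off its palette color.
noisy = [row[:] for row in rgb]
noisy[0][1] = (215, 25, 66)
recovered = decode_mask(noisy)

# A normal pointing straight at the camera survives the round trip
# up to 8-bit quantization error.
normal_back = decode_normal(encode_normal((0.0, 0.0, 1.0)))
```

The same pattern extends to depth by mapping a bounded depth range onto pixel intensity, at the cost of quantizing the prediction to the color resolution of the output image.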

The ML research implication is that generative pretraining captures structures useful for discriminative tasks at a deeper level than the field generally assumed. SAM 3 is a heavily engineered specialist with concept-grounded segmentation and class-agnostic mask prediction; losing 4.7 mIoU points to a generalist is the kind of result that suggests the specialist architecture was not capturing something the generator already knew. That argument has been made for language since GPT-3, where a generatively pretrained model became competitive with task-specific NLP models on many benchmarks without task-specific fine-tuning. Vision Banana is the cleaner version of that argument for computer vision. If the result holds under independent evaluation across more datasets and modalities, the practical consequence is that the next generation of vision systems looks less like specialized pipelines and more like prompted image generators with task instructions.

For builders, the immediate impact is limited because Vision Banana is research, not a shipped product, and the model weights underlying Nano Banana Pro are not publicly released. The longer-term implication is more interesting. If image generation is genuinely a unified interface for understanding and production, the cost structure of building computer vision systems shifts. Today, a production CV pipeline often combines a backbone, several task-specific heads, separate training data and labels for each task, and integration glue. Vision Banana's framing collapses that into a single instruction-followable generator with task-conditional outputs. Building, for example, an autonomous driving perception stack on top of one such model would replace four or five training pipelines with one, and would let the system handle novel tasks (predict water depth, identify glare patches, etc.) by just prompting rather than retraining. That is conceptually clean. Whether it matches the engineering quality of specialist pipelines under safety-critical constraints is the next thing the research community will have to test.
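The "one generator, many tasks" framing can be sketched as a small dispatch table in which a task is just an instruction string plus a decoder for the RGB output. Everything here is hypothetical: the `Generator` signature, the task names, the prompts, the depth encoding, and the stub that stands in for a model call. No public Vision Banana API is described in the article.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple

Pixel = Tuple[int, int, int]
Image = List[List[Pixel]]
# Hypothetical interface: (input image, text instruction) -> RGB output image.
Generator = Callable[[Image, str], Image]


@dataclass
class Task:
    instruction: str                    # prompt sent to the generator
    decode: Callable[[Image], object]   # converts RGB output to task values


def gray_to_depth(rgb: Image, max_depth_m: float = 100.0) -> List[List[float]]:
    # Assumed encoding: red-channel intensity scales linearly with depth.
    return [[px[0] / 255.0 * max_depth_m for px in row] for row in rgb]


def white_to_bool(rgb: Image) -> List[List[bool]]:
    # Assumed encoding: bright pixels mark the region of interest.
    return [[px[0] > 127 for px in row] for row in rgb]


# Adding a task means adding an entry here, not training a new head.
TASKS: Dict[str, Task] = {
    "depth": Task("Render a depth map of this scene.", gray_to_depth),
    "glare": Task("Paint glare patches white, everything else black.",
                  white_to_bool),
}


def run(generator: Generator, image: Image, task_name: str):
    task = TASKS[task_name]
    return task.decode(generator(image, task.instruction))


def stub_generator(image: Image, instruction: str) -> Image:
    # Stand-in so the sketch runs; a real model call would replace this.
    color = (255, 255, 255) if "glare" in instruction else (51, 51, 51)
    return [[color for _ in row] for row in image]


frame: Image = [[(0, 0, 0), (0, 0, 0)]]
depth = run(stub_generator, frame, "depth")
glare = run(stub_generator, frame, "glare")
```

The design point is that the decoders are cheap, fixed functions, so the only learned component shared across tasks is the generator itself.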