SAP has acquired Dremio and Prior Labs in a single announcement, alongside a separate commitment of $1.17B over four years to fund Prior Labs' development. The strategic framing comes from SAP CTO Philipp Herzig: *"Enterprise AI doesn't stall because the models aren't good enough; it stalls because the data isn't ready."* That is the floor-versus-ceiling split that has quietly defined enterprise AI for two years: frontier labs push the ceiling of model capability, while enterprises burn budget getting their tabular data into a state where any model can use it productively. SAP is buying both layers in one move.

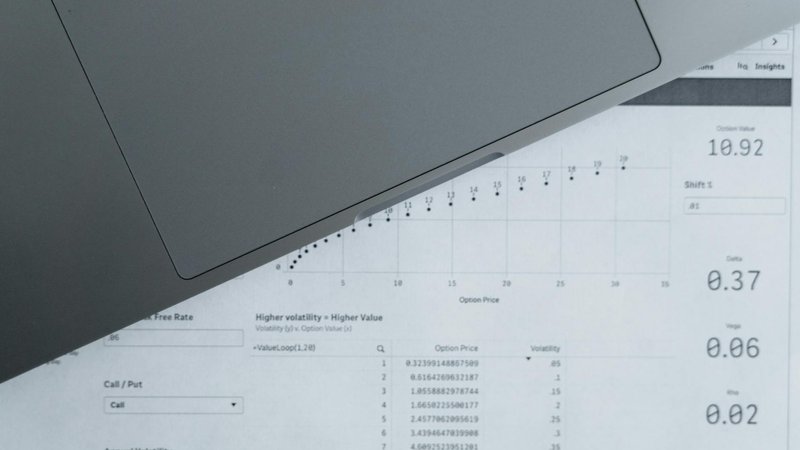

Dremio brings a data lakehouse built around two open-source technologies that matter for the broader ecosystem: Apache Iceberg (an open table format with snapshot-based versioning, the emerging open standard) and Apache Polaris (a catalog that manages metadata for Iceberg tables). It also ships a built-in AI agent that lets users query data without writing SQL. Prior Labs brings TabPFN-2.5, a transformer built specifically for tabular data that does in-context learning rather than per-task training and can process up to 100,000 rows per task. There is also a distillation engine that generates lightweight, dataset-specific algorithms from the base model. The integration target is SAP's Business Data Cloud; integration paths for HANA, Datasphere, and Joule were not disclosed. No financial terms were given for the individual acquisitions; the $1.17B over four years is the commitment to TabPFN development, not the purchase price.
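To make the versioning idea behind Iceberg concrete, here is a minimal sketch of a snapshot-based table: each write adds an immutable data file and commits a new snapshot listing the files visible at that version, so older versions stay readable. This is a toy model only. `ToyTable`, `Snapshot`, and the file-naming scheme are hypothetical names invented for illustration, not the Iceberg API or its on-disk layout.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple

Row = Tuple  # one record; schema-free to keep the sketch short


@dataclass(frozen=True)
class Snapshot:
    snapshot_id: int
    parent_id: Optional[int]
    data_files: Tuple[str, ...]  # immutable files visible in this version


@dataclass
class ToyTable:
    """Hypothetical stand-in for a snapshot-based table format (not Iceberg's API)."""

    files: Dict[str, List[Row]] = field(default_factory=dict)
    snapshots: List[Snapshot] = field(default_factory=list)

    def append(self, rows: List[Row]) -> int:
        """Write rows to a new immutable file and commit a snapshot that adds it."""
        file_name = f"data-{len(self.files):05d}"
        self.files[file_name] = list(rows)
        parent = self.snapshots[-1] if self.snapshots else None
        visible = (parent.data_files if parent else ()) + (file_name,)
        snapshot = Snapshot(
            snapshot_id=len(self.snapshots) + 1,
            parent_id=parent.snapshot_id if parent else None,
            data_files=visible,
        )
        self.snapshots.append(snapshot)
        return snapshot.snapshot_id

    def scan(self, snapshot_id: Optional[int] = None) -> List[Row]:
        """Read the table as of a given snapshot (the latest one if omitted)."""
        if not self.snapshots:
            return []
        if snapshot_id is None:
            snapshot = self.snapshots[-1]
        elif 1 <= snapshot_id <= len(self.snapshots):
            snapshot = self.snapshots[snapshot_id - 1]
        else:
            raise KeyError(f"unknown snapshot {snapshot_id}")
        return [row for name in snapshot.data_files for row in self.files[name]]


table = ToyTable()
v1 = table.append([("acme", 100)])
v2 = table.append([("globex", 250)])
latest = table.scan()       # both rows
as_of_v1 = table.scan(v1)   # only the first row ("time travel")
```

The point of the design is that readers never see a half-written table: a query pins one snapshot, and writers only ever add files and a new snapshot on top.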

For builders, the structural signal is that tabular AI is the underserved frontier. Image, text, and code get the attention because consumer apps and developer tools are the marketing surface, but row-and-column data is where most enterprise data lives, and the model approaches that work for unstructured text don't transfer cleanly to tabular workloads. TabPFN's in-context-learning approach (no fine-tuning per dataset, just feed the table at inference time) is a different design from the LLM-on-tables approach most enterprise vendors have been shipping. SAP betting $1.17B over four years on this team is a strong signal that tabular-specific transformer approaches are commercially viable, not just an academic thread. Watch for whether Snowflake, Databricks, and Microsoft Fabric counter-move with their own tabular-foundation-model plays.
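The "feed the table at inference time" pattern is easier to see as an interface. The sketch below is not TabPFN and does not use its library: it swaps in a softmax-weighted vote over distances as a stand-in for a pretrained transformer's forward pass. What it does preserve is the shape of the call: labeled context rows and unlabeled query rows go in together, predictions come out, and nothing is trained or stored per dataset. `predict_in_context`, `temperature`, and the example labels are hypothetical; the 100,000-row cap is the per-task figure quoted above.

```python
import math
from collections import defaultdict
from typing import List, Sequence

MAX_CONTEXT_ROWS = 100_000  # per-task row limit quoted in the article


def predict_in_context(
    context_x: Sequence[Sequence[float]],
    context_y: Sequence[str],
    query_x: Sequence[Sequence[float]],
    temperature: float = 1.0,
) -> List[str]:
    """Label query rows by conditioning on labeled context rows in one call.

    Stand-in logic (an assumption, not TabPFN's method): each query row
    "attends" to context rows with softmax weights over negative squared
    distance, and the label with the most weight wins. No parameters are
    fitted or kept between calls.
    """
    if len(context_x) != len(context_y):
        raise ValueError("context_x and context_y must have the same length")
    if not context_x:
        raise ValueError("context must contain at least one labeled row")
    if len(context_x) > MAX_CONTEXT_ROWS:
        raise ValueError(f"context exceeds {MAX_CONTEXT_ROWS} rows")

    predictions = []
    for query in query_x:
        scores = [
            -sum((a - b) ** 2 for a, b in zip(query, row)) / temperature
            for row in context_x
        ]
        top = max(scores)  # subtract the max for numerical stability
        votes = defaultdict(float)
        for score, label in zip(scores, context_y):
            votes[label] += math.exp(score - top)
        predictions.append(max(votes, key=votes.get))
    return predictions


# A new "task" is just a new table passed at call time.
context_x = [[1.0, 1.0], [1.2, 0.9], [8.0, 9.0], [9.1, 8.7]]
context_y = ["low_risk", "low_risk", "high_risk", "high_risk"]
labels = predict_in_context(context_x, context_y, [[1.1, 1.0], [8.5, 9.2]])
```

Contrast this with per-task training, where each new dataset would require a separate fit step that produces and stores its own model weights before any prediction can be served.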

If you build enterprise AI products, the practical implication is that the stack is bifurcating: frontier-lab models for unstructured workloads, tabular-specific models for structured workloads, and the lakehouse layer (Iceberg + Polaris + Delta Lake) becoming the standard substrate. If you ship predictive features on tabular data, TabPFN-style in-context-learning models are worth benchmarking against your current LLM-with-SQL-tools approach: the latency and cost profile is different, and may be better for high-cardinality tables, though that is something to measure on your own data. If you maintain an open-source project in the lakehouse space, SAP committing this hard to Iceberg is bullish for that format's gravity over Delta Lake or Hudi. The real story isn't that SAP made acquisitions; it's that they articulated tabular AI as a separate stack worth $1.17B of dedicated investment.